(satire) *Stephen Miller Reminds Picky-Eater Son That There Are Starving Kids In Basement.*

The campaign for the right to die, and to assistance in dying, continues in the UK, though limited to those who are expected to die anyway within a short time.

Some well-known LLMs have been proved to be able to deliver up nearly complete copies of the text of some well-known books.

They may, as a result, be found to infringe the copyright on those books.

Precisely why and how this happens is a factual question, but this article does not tell us the answer. In particular, it does not prove that their developers intentionally and specifically stored large parts of any specific book's text verbatim. It could be that the writing style of that book is so distinctive that continuing repeatedly from any portion of the book always finds the text that comes next in the book.

A couple of years ago I heard that someone had made Copi(a)lot reproduce the whole text of the GNU GPL version 3 that way. GitHub surely did not intend for it to do that! And, of course, it omitted the crucial license notice which ought to say that the program is released under the GNU GPL, version 3 or later.

Matt Stoller thinks Google's next goal is to control nearly all prices based on collecting personal information about nearly all purchases and personal preferences.

See https://www.consumerreports.org/money/questionable-business-practices/instacart-ai-pricing-experiment-inflating-grocery-bills-a1142182490/ and https://substack.com/redirect/d27787e6-e804-4231-8f23-2eaf0e1bc652?j=eyJ1IjoiMmRjd2YyIn0.m51z6BBZ0nK06POYEEH_mMhm8t1iRiokalBUx8IccKE

It is unfortunate that Stoller effectively boosts this scheme by describing it with the term "artificial intelligence".

The rulers of China care only a little about human rights, but the current rulers of the US have ceased to raise the issue because they care even less.

Describing what Massachusetts has done, and is considering doing, to protect immigrants from the federal deportation thugs and stop the latter from causing them gratuitous and avoidable problems.

Some members of SCROTUS publicly spread hatred of Muslims.

The world's main religions — Christianity, Hinduism, Islam and Judaism — have all displayed in recent years the potential to persecute unbelievers. Right-wing fanatics try to stir up the hatred to the point of killing. However, in each of those religions, most believers do not want to kill and can live in peace with other groups. The crucial thing is to reject the right-wing killers.

Budget cuts and direct cancellation of specific programs have left the US unprepared for new pandemics.

Both the saboteur in chief and the saboteur of health have pushed to create these new problems.

Many US universities are thinking about themselves as businesses and judging their activities in terms of cost and profit.

The idea that an educated populace is important is being discarded.

Besides, why bother studying what people have written, or art they have made, when de-generative supposed intelligence can produce it faster?

Where the Deform Party won election to local government, they will use local government power to spread persecution and fear.

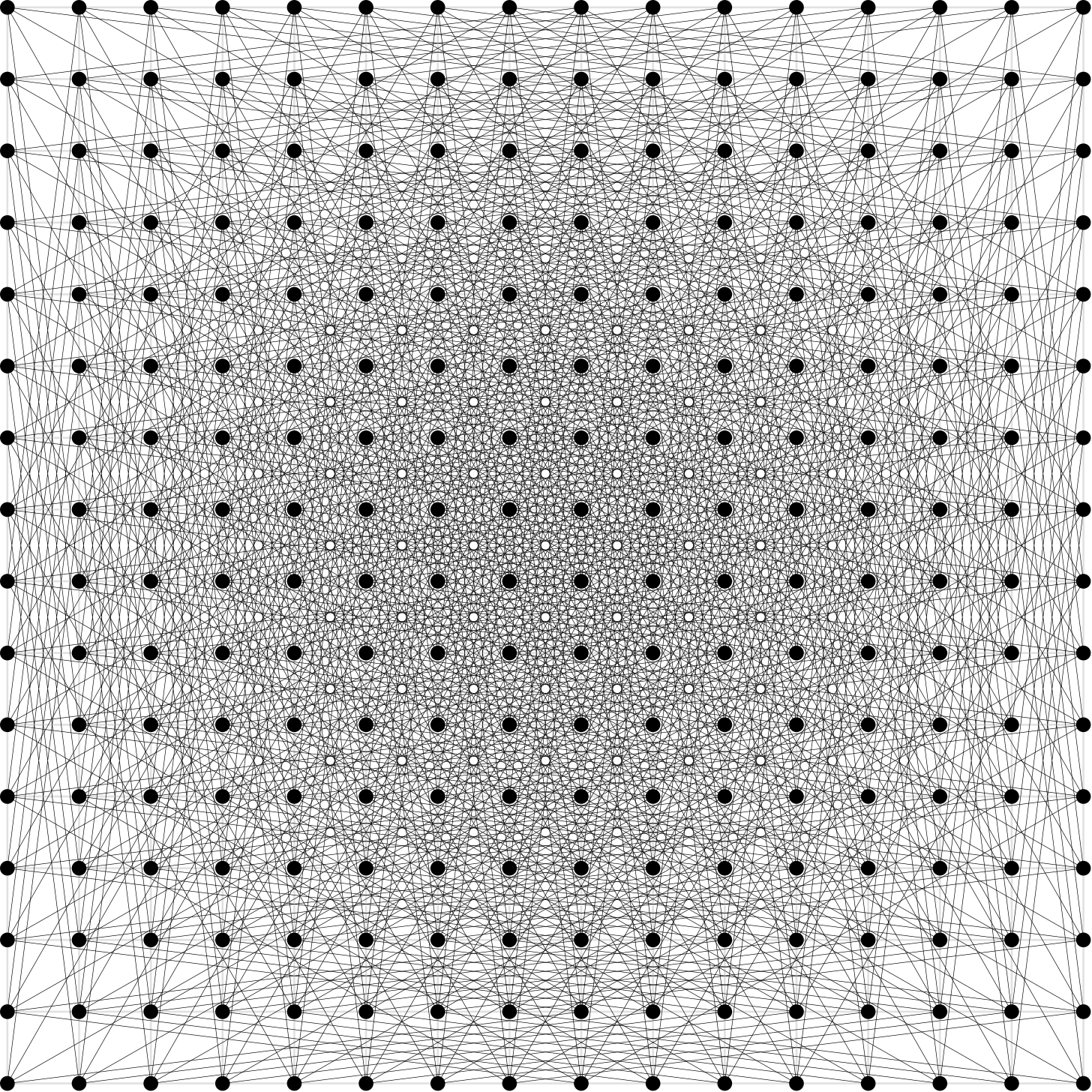

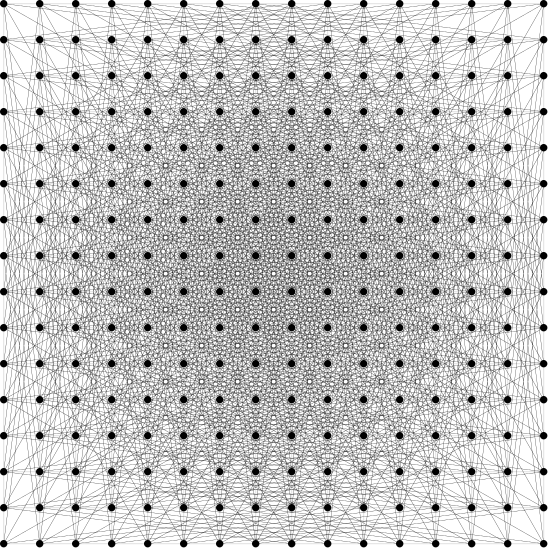

There’s a new math result which is a milestone for AI mathematics. It’s a human readable and insightful result on a conjecture of some renown. It improves on a previous construction of Erdos to make a set of points in the plane with a relatively large number of unit distances between them.

Where the AI got its inspiration from can be as ineffable as it is for humans, but there’s a plausible narrative that it got direct inspiration from the Erdos construction. A proof tells a story, and the moral of the story belongs to the reader, not the storyteller. To some the Erdos construction is a story about square grids. But it can also be read as a story about taking an algebraic construction, finding a projection onto geometric space which preserves unit distances, and then solving a number theory problem in the algebraic space to have lots of unit distances. Instead of using the straightforward grid structure, the new construction uses a more esoteric algebraic construction, pulling in a powerful theorem from a completely different place. In a funny detail, the underlying number theory problem it relies on is fairly trivial while the Erdos one requires some work. That is no coincidence given that there are a lot more edges: the requirements for them to work are much less stringent.
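The "story about square grids" reading can be made concrete with a small brute-force count. The sketch below is only an illustration of the grid idea, not the construction from either paper: it takes a k by k integer grid, tallies every pairwise squared distance, and reports the one that repeats most often. Rescaling the grid so that this distance has length one gives a point set with that many unit distances. The function name `most_common_distance` is made up for this example.

```python
from collections import Counter
from itertools import combinations


def most_common_distance(k):
    """Return (squared_distance, pair_count) for the most repeated
    distance among the points of a k x k integer grid."""
    points = [(x, y) for x in range(k) for y in range(k)]
    counts = Counter(
        (ax - bx) ** 2 + (ay - by) ** 2
        for (ax, ay), (bx, by) in combinations(points, 2)
    )
    return counts.most_common(1)[0]


if __name__ == "__main__":
    for k in (3, 5, 10, 20):
        d2, pairs = most_common_distance(k)
        print(f"{k}x{k} grid, {k * k} points: "
              f"squared distance {d2} occurs {pairs} times")
```

On a 5 by 5 grid the winner is already squared distance 5 rather than 1, because 5 can be written as a sum of two squares in more orientations. That is the number theory side of the grid story: the best scale is the one whose square has many representations as a sum of two squares.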

The obvious question is: What does it look like? The papers and articles contain no pictures of the new construction. There’s a reason for that, but there’s also a reason one should be included anyway. For small examples the construction produces some very tesseract-looking things, and at larger scales it looks like a point cloud without any obvious nice geometric properties. At the smaller scale where the structure can be gleaned it looks actively counterproductive, producing fewer distance coincidences than the Erdos construction. You have to crank up the number of dimensions and the radius of the ball quite a bit before it starts getting favored, and by then the number of points has become huge.

But that doesn’t mean there can’t be a picture! You can have a density plot where regions with more points are darker, and having the picture may yield geometric insights which the algebraic construction was obfuscating. Does it look like the shadow of a sphere? A disc? A Gaussian plot? Whatever the shape is, the next question is: How big is the unit distance compared to the width of the shape? Here is where it gets interesting: It appears that the distance is quite small. For me that starts raising alarm bells. Didn’t we already crop to within a ball in the algebraic construction? Yes we did, but that was to make the number of points finite, not to reduce the geometric range. The projection between the algebraic and geometric space makes many things look very different, with the one exception that certain exactly unit distances stay unit. Other distances get scrambled. So that raises the next question: Why can’t we just crop geometrically to some small constant factor of the unit distance at the end, thus making a much better result by reducing the denominator? This might actually work! It depends on just how much smaller the cropping is and how sparse a region can be found. I honestly don’t know if it works out, and don’t have the tools to analyze this because it’s a bizarre jump back into geometric space from algebraic, but it’s plausible and the benefits might be big, so it’s certainly worthy of further analysis.

The concrete bounds now stand at a lower bound of 1.014 on the polynomial exponent, up from 1, which was previously conjectured to be optimal. The known upper bound is 4/3. That range of possibilities is very interesting and we most definitely have not heard the last word on this. The AI construction just showed 1+ε for some small positive ε, and the 1.014 is a later explicit improvement. Maybe there will be a polymath project on it.
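Written out, the exponent range looks like this. The notation is introduced here for illustration and is not from the post: $u(n)$ is the largest number of unit distances among $n$ points in the plane, and $\alpha$ is its growth exponent. The grid bound in the last line is the standard form of the Erdos lower bound as usually quoted, with an unspecified constant $c > 0$.

```latex
\[
  \alpha \;=\; \limsup_{n \to \infty} \frac{\log u(n)}{\log n},
  \qquad
  1.014 \;\le\; \alpha \;\le\; \frac{4}{3}.
\]
% Grid construction (lower bound) for comparison; its exponent tends to 1:
\[
  u(n) \;\ge\; n^{\,1 + c/\log\log n}.
\]
```

The previously conjectured optimal value corresponds to $\alpha = 1$, so any fixed exponent above 1 rules it out.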

Talking to AI (specifically Opus 4.7) about this is very interesting. It can read through the whole construction no problem, and talk about it fluently. But then when it gets into discussing geometric insights its intuition is garbage. With some prodding I can get it to understand basic points, and it readily understands after they’re pointed out that these are very basic things, but it just can’t wrap its brain around anything without having it explained. It seems like the new construction is exactly the thing it happens to be super good at: Tackling something purely symbolically, pulling in outside theorems and constructions from seemingly totally unrelated areas, following a roadmap which had already been laid out for it. Drawing from geometric intuitions is something which it simply can’t do. The contrast is very bizarre in this particular case where it’s going from genius to idiot talking about the exact same problem with the perspective shifted only slightly. I haven’t, and probably won’t, grok the full new construction, but it was able to explain the basic outline of the construction to me and construct some basic examples, which was fun and interesting.

The other notable thing about the AI strength here is that this is a constructive proof. AI seems to be better at that than at proofs of non-existence, which is consistent with it being fast and not having much insight. Constructions require fiddling around until you find something, with much clearer partial results along the way, whereas with proofs of non-existence you have to intuit a roadmap or you don’t make any obvious headway until the very end. The proof of the Robbins conjecture is similar: The core insight is realizing up front that you can find a counterexample to Modus Tollens and then do proof by contradiction. After that it looks a lot more like finding a solution to a post substitution problem than a meaningful proof.

WP 7.0 was just released and apparently this is the "AI" release. Is there a patch to excise this cancer from core, or is there a bugfix-tracking fork that I should switch to instead, or should I just never upgrade again, or what?

Previously, previously, previously, previously, previously, previously.

I thought: enough is enough, I need to figure out what clown service these are coming from and start blocking whole networks.

Nope, they're almost all from cable modems, not from hosting facilities:

49.43.169.105 "55836 | IN | apnic | 2010-10-27 | RELIANCEJIO-IN Reliance Jio Infocomm Limited, IN"
49.36.222.165 "55836 | IN | apnic | 2010-10-27 | RELIANCEJIO-IN Reliance Jio Infocomm Limited, IN"
49.207.199.34 "24309 | IN | apnic | 2004-12-08 | CABLELITE-AS-AP Atria Convergence Technologies Pvt. Ltd. Broadband Internet Service Provider INDIA, IN"
60.254.88.85 "17488 | IN | apnic | 2000-11-28 | HATHWAY-NET-AP Hathway IP Over Cable Internet, IN"
14.176.188.15 none
113.181.115.52 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
222.252.180.57 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.191.68.81 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
203.162.75.165 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.166.25.40 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.234.181.48 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.232.16.94 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.226.156.45 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.186.56.201 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
123.27.88.193 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
123.24.165.158 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
123.23.84.13 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.173.75.211 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.254.59.191 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.247.93.88 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
134.122.23.167 "14061 | US | arin | 2012-09-25 | DIGITALOCEAN-ASN - DigitalOcean, LLC, US"
113.184.185.188 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.170.114.205 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.236.40.167 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.170.244.63 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.165.28.93 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
163.223.48.0 "138797 | IN | apnic | 2019-02-11 | COASTAL-AS-IN Coastal Broadband And Online Services Pvt. Ltd., IN"
58.249.137.74 "17622 | CN | apnic | 2001-01-18 | CNCGROUP-GZ China Unicom Guangzhou network, CN"
39.68.1.38 "4837 | CN | apnic | 2001-09-17 | CHINA169-BACKBONE CHINA UNICOM China169 Backbone, CN"
14.191.63.98 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.168.114.54 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.161.135.60 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.228.183.221 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.177.130.34 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.236.8.100 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
144.31.35.211 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
14.230.66.236 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.166.3.113 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
143.20.253.201 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
14.172.46.240 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.191.87.248 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.240.145.79 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
171.250.165.14 "7552 | VN | apnic | 2002-10-08 | VIETEL-AS-AP Viettel Group, VN"
14.230.54.62 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.180.27.31 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
177.137.214.144 "263087 | BR | lacnic | 2012-04-18 | Rawnet Informatica LTDA, BR"
120.140.8.61 "4788 | MY | apnic | 1996-10-20 | TTSSB-MY TM TECHNOLOGY SERVICES SDN. BHD., MY"
49.37.213.156 "55836 | IN | apnic | 2010-10-27 | RELIANCEJIO-IN Reliance Jio Infocomm Limited, IN"
216.75.132.173 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
144.31.35.77 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
216.75.132.173 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
216.75.132.173 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
144.31.35.77 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
144.31.35.77 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
216.75.132.173 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
216.75.132.173 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
143.20.253.246 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
143.20.253.246 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
14.169.142.16 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.190.135.174 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.165.212.210 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.165.39.79 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.254.166.139 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.170.190.94 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
123.31.236.195 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.175.2.197 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.245.142.32 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.168.130.126 none
14.234.120.102 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.187.7.237 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.169.200.172 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.187.7.158 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
222.253.132.0 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.179.243.6 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.189.84.206 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.189.125.112 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
123.27.175.238 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.179.139.90 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
216.75.132.167 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
216.75.132.167 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
14.191.192.133 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
216.75.132.212 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
207.180.11.239 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
207.180.11.239 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
113.186.16.134 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.170.25.114 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.229.9.235 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.188.28.166 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
123.16.224.122 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.173.194.120 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.239.74.25 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.239.32.210 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
14.177.207.17 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
113.173.255.127 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
144.31.35.232 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
181.213.41.191 "28573 | BR | lacnic | 2003-11-27 | Claro NXT Telecomunicacoes Ltda, BR"
113.182.199.97 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
88.235.232.128 "9121 | TR | ripencc | 1998-12-29 | TTNET, TR"
181.191.153.5 "267488 | BR | lacnic | 2017-08-17 | Pronet Construcoes LTDA, BR"
211.241.113.62 "9316 | KR | apnic | 1998-06-03 | DACOM-PUBNETPLUS-AS-KR DACOM-PUBNETPLUS, KR"
152.58.183.66 "55836 | IN | apnic | 2010-10-27 | RELIANCEJIO-IN Reliance Jio Infocomm Limited, IN"
59.184.62.53 "9829 | IN | apnic | 2000-01-19 | BSNL-NIB National Internet Backbone, IN"
14.164.166.200 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
152.57.115.213 "55836 | IN | apnic | 2010-10-27 | RELIANCEJIO-IN Reliance Jio Infocomm Limited, IN"
49.34.210.1 "55836 | IN | apnic | 2010-10-27 | RELIANCEJIO-IN Reliance Jio Infocomm Limited, IN"
1.246.164.57 "9318 | KR | apnic | 1998-06-03 | SKB-AS SK Broadband Co Ltd, KR"
31.223.92.91 "12735 | TR | ripencc | 1999-10-18 | ASTURKNET, TR"
103.66.177.245 "135578 | BD | apnic | 2016-06-18 | DIT-AS-AP Muhammad Nasir ta Dhaka Information Technology, BD"
152.42.242.171 "14061 | US | arin | 2012-09-25 | DIGITALOCEAN-ASN - DigitalOcean, LLC, US"
143.20.253.31 "401560 | US | arin | 2025-01-03 | ONECABLE - OneCable Network LLC, US"
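A listing like this is easier to act on once it is tallied per network. The following is a rough sketch, not the script that produced the listing: it assumes each record is an IPv4 address followed by either `none` or a quoted string whose five pipe-separated fields are ASN, country, registry, allocation date and AS name (a layout read off the entries above). The names `tally_by_asn` and `sample` are made up for the example.

```python
import re
from collections import Counter

# One lookup result: an IPv4 address, then either the bare word "none"
# or a quoted "ASN | CC | registry | allocated | AS name" record.
ENTRY = re.compile(r'(\d{1,3}(?:\.\d{1,3}){3})\s+(?:none|"([^"]*)")')


def tally_by_asn(text):
    """Count hits per (ASN, AS name); failed lookups go under ("none", "unknown")."""
    counts = Counter()
    for _ip, record in ENTRY.findall(text):
        if not record:
            counts[("none", "unknown")] += 1
            continue
        # Collapse stray line breaks inside a record before splitting it.
        fields = [f.strip() for f in " ".join(record.split()).split("|", 4)]
        counts[(fields[0], fields[4])] += 1
    return counts


sample = '''
49.43.169.105 "55836 | IN | apnic | 2010-10-27 | RELIANCEJIO-IN Reliance Jio Infocomm Limited, IN"
14.176.188.15 none
113.181.115.52 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
222.252.180.57 "45899 | VN | apnic | 2009-08-28 | VNPT-AS-VN VNPT Corp, VN"
'''

if __name__ == "__main__":
    for (asn, name), hits in tally_by_asn(sample).most_common():
        print(f"{hits:4d}  AS{asn}  {name}")
```

Sorting by hit count shows how many requests each network accounts for, which is the number that matters when deciding whether blocking a whole network is worth the collateral damage.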

"and the question every CEO eventually has to answer: who's next?"

Previously, previously, previously, previously, previously, previously, previously, previously, previously, previously, previously.

I weep for the future.

This document establishes a policy for how LLMs can be used when contributing to rust-lang/rust. [...]

No comment on this PR may mention the following topics:

- Long-term social or economic impact of LLMs

- The environmental impact of LLMs

- Anything to do with the copyright status of LLM output

- Moral judgements about people who use LLMs

We have asked the moderation team to help us enforce these rules.

Previously, previously, previously, previously, previously, previously, previously, previously.

Penile implant specialist with history of far-right comments led hantavirus presser:

Before he joined the Trump administration last year, Dr. Brian Christine was an Alabama-based urologist who specialized in penile implants. He has little public health experience and a history of far-right commentary and promoting conspiracy theories. He's said the Covid pandemic led to a wider government plot to control people, compared the Biden administration to Nazi Germany and suggested the Covid vaccine had little effect in stopping the pandemic.

He once hosted a YouTube show called "Erection Connection," a professional YouTube series on erectile dysfunction for fellow urologists.

A CNN review of archived podcast episodes, social media posts and radio appearances found that Christine repeatedly framed public health institutions, the federal government and pandemic-era policies as tools used to target conservatives and religious Americans.

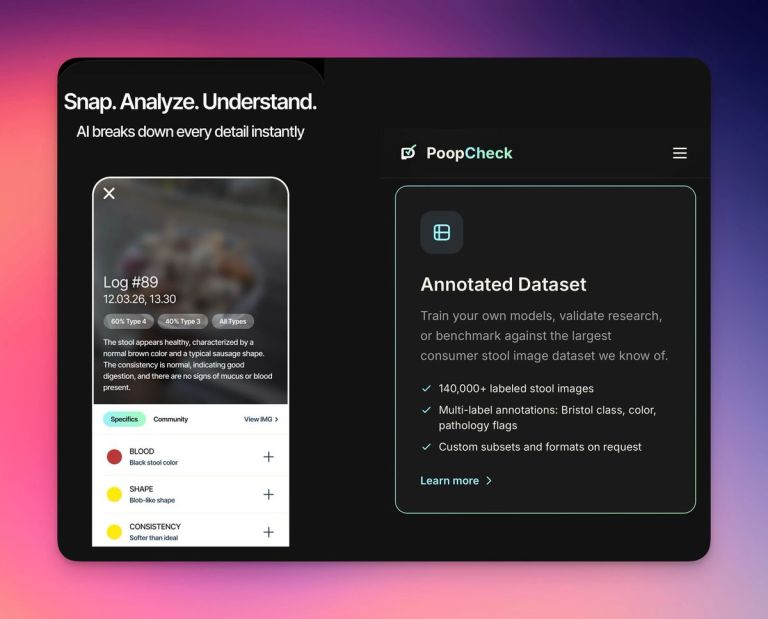

The post was advertising exactly what it sounds like: A database of poop images, collected from an AI poop analyzing app that he had launched several years ago. Basically, 25,000 people had been taking images of their poop and uploading them to his app. He'd been collecting, analyzing, and annotating these images and now wanted to sell access to them. [...]

The poop database comes from an app called PoopCheck, an app made by a company called Soft All Things that purports to use AI to analyze images of one's stool in order to give you a "daily gut health score." [...]

The app also features a "community," of 151,317 "shared stools" at the time of this writing and a "leaderboard," where people can share images of their poop for commentary from other users and earn points for participating.

Previously, previously, previously, previously, previously, previously.

Planet Debian upstream is hosted by Branchable.